(Dobbs) Abusing Artificial Intelligence: There Are No Silver Bullet Solutions.

We have to detect its evil uses among the good ones.

When I was senior correspondent for Mark Cuban’s all-high-definition television network HDNet, I anchored the Democratic National Convention at the Fleet Center in Boston, then the Republican National Convention at Madison Square Garden in New York. If you’d been watching (which few were), you’d have seen me behind my anchor desk high above each arena, with politicians and delegates and lots of balloons swirling on the convention floors behind me.

Except I wasn’t there. I was sitting on a tall stool— not even behind an anchor desk— at HDNet’s primary production studio in Denver. But you would not have known. What you were seeing was real, and in real time: the politicians, the delegates, even the anchor desk. We simply weren’t all in the same place. If I hadn’t said on each broadcast that I was actually reporting from Denver, you would have had no way to know otherwise.

But that was then, 2004, the age of innovative video overlays, which seemed at the time like pretty hot technology. And this is now, 20 years later: the age of artificial intelligence, more commonly abbreviated to “A.I.” It makes television technology from 20 years ago seem like child’s play, and in terms of both simple use and easy access, it’s coming at us at Mach speed.

That’s both good and bad. The good parts are already with us.

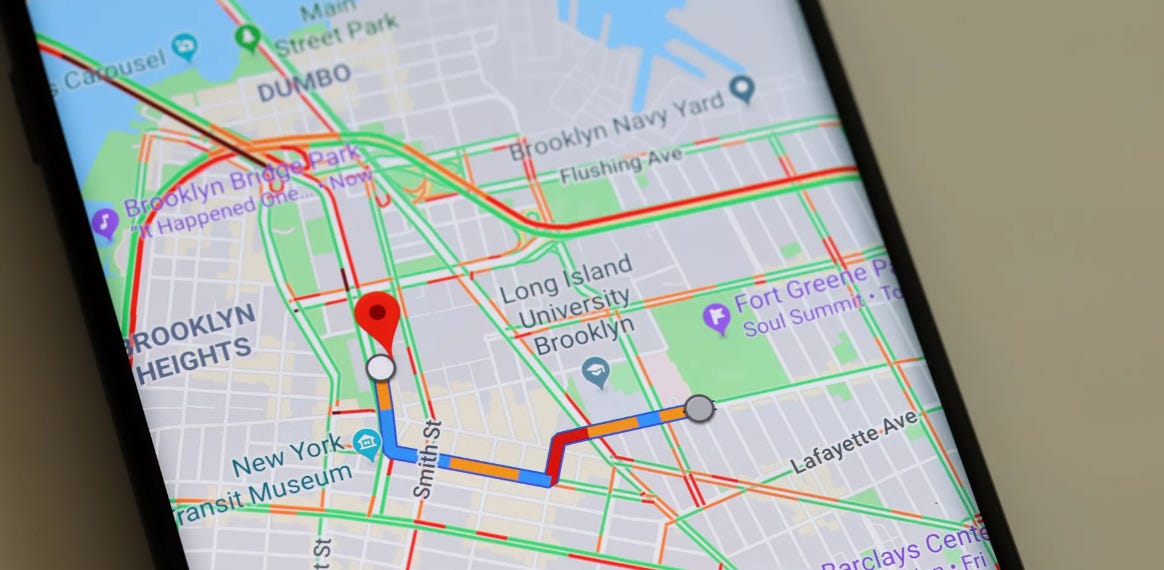

Google Maps, with real-time routing suggestions, uses A.I. So does the home page on Netflix that offers entertainment suggestions compatible with what you’ve watched in the past. Likewise (although not always so good), those pop-up ads on your computer screen, reflecting some previous search you’ve made. And self-driving cars, which compile the conditions all around you and guide you, hopefully, to your destination. Even those vacuum cleaners that learn their way around a home, covering every corner without discrete commands, are a form of A.I.

But A.I. doesn’t have a mind of its own. In the simplest terms I can figure out to explain it, A.I. is the lightning-fast compilation of digitized data from every conceivable source to find a solution— from driving to marketing to vacuuming— without additional human input.

But high-tech specialists have been using A.I. to create those things for us because we couldn’t use it ourselves. Until now. Just three months ago, the leading commercial company in the field, called OpenAI, made artificial intelligence accessible to anyone who wants to try it. Then Microsoft, in partnership with OpenAI, made it available on its Bing search engine. The Chinese search engine Baidu is soon following suit. Google is not far behind.

What this means is, instead of just searching on the internet for one piece of information at a time and eventually combining the searches to come up with the solution we need, we can ask an A.I. program to do the heavy lifting without a specialist in sight. An easy example comes from Clare Duffy, a writer with CNNBusiness, who says, “I asked Bing to write me a five-day vegetarian meal plan. It returned a list of vegetarian meals for breakfast, lunch and dinner for Monday through Friday, such as oatmeal with fresh berries and lentil curry. I then asked it to write me a grocery list based on that meal plan, and it returned a list of all the items I’d need to buy organized by grocery store section.”

In the fields of healthcare and education, science and law, business plans and vacation plans, the implications are exciting. The information’s already out there; A.I. just puts it into usable formats, and fast.

But like I say, that’s the easy stuff. Thanks to public access to A.I., with just a few short instructions we can actually use our own computers now to write essays, to compose music, to produce art. It’s called “automatic content creation.”

For example, as part of a joint study by Georgetown University’s Center for Security and Emerging Technology, the Stanford Internet Observatory, and the company OpenAI, someone fed the following words into Google’s A.I. to see what it would come up with: “A raccoon wearing formal clothes, wearing a top hat and holding a cane. The raccoon is holding a garbage bag. Oil painting in the style of Rembrandt.” That’s it.

And this is what they got.

Likewise, you can tell an A.I. program to write a birthday song for a friend, and you can ask for it in the style of rock, the style of rap, the style of soul, the style of opera. And did you watch Sunday’s Super Bowl? An ad in the first half for E-Trade featured talking babies whose lips absolutely matched the adult voices that came from their mouths. A.I.

But here’s where the trouble starts. No matter what it’s creating, A.I. is drawing from existing data, which sometimes means drawing from resources someone else owns. Even more troubling, it can use those resources to create content out of whole cloth. The Georgetown/Stanford/OpenAI study illustrated the technical progress of A.I. by displaying eight faces, all compiled from real human faces but none of them real themselves.

These implications are more frightening than exciting, because when it comes to manipulation, to disinformation, the possibilities are endless. For example, in an A.I. field called “deepfake videos,” troublemakers created a video that seamlessly superimposed Michelle Obama’s face on the gyrating body of a porn actress who was stripping. In another, you could see and hear Ukrainian president Zelensky telling his troops to surrender to Russia.

These things can be produced by sovereign states out to influence public opinion, or by lone wolves out to smear someone they don’t like.

Then there is the issue of writing by A.I. Feed in the right words, as they did to get the picture of the raccoon, and in mere minutes you can produce an essay that proves that Bill Gates is tracking all of us with the microchips he put in our Covid vaccines, or that there really was no WW2 Holocaust. As columnist Maureen Dowd recently asked, “Once A.I. can run disinformation campaigns at lightning speed, will democracy stand a chance?”

I got together the other day with one of my best friends from growing up, Rick Levin, who for 20 years was the president of Yale. I asked him what leaders in the field of education think about A.I., which perfidious students, a.k.a. students who are willing to cheat, can now use to write their papers. His answer was, “They’re scared stiff.”

But he also told me, “They’re working on it.” And they are. OpenAI just put out a tool to try to distinguish between what a human would write and what a computer would write. What it does is hunt not just for hidden markers like digital signatures, but for mistakes in logic, grammar, and phrasing, along with other errors that a human would be unlikely to make. However, while no doubt the tool will get better, OpenAI currently concedes that “it is impossible to reliably detect all A.I.-written text.” Right now, it’s catching just over 25%.

That Georgetown/Stanford/OpenAI report offers a sobering conclusion about the manipulation of A.I. for the wrong purposes: “There are no silver bullet solutions.” But at the same time, it also offers suggestions to mitigate disinformation and other negative uses of A.I. One is for A.I. developers to build systems that are more “fact-sensitive.” Another is to require what they call “proof of personhood” for A.I. users. Yet another calls for deeper collaboration between A.I. developers, government agencies, and social media companies.

However, even those ideas have their limits. The technology is changing so fast that it’s almost impossible to anticipate A.I.’s next generation. What’s more, those who will use A.I. to destabilize everything from business to politics to education eventually will build their own systems, which won’t be subject to anyone’s controls.

The train has left the station and there’s no stopping it. Artificial intelligence will be used for good, but as we’ve seen with the evolution of the internet, it also will be used for evil, which means that ultimately, it’s up to each of us to figure out what’s fact and what’s fiction, what’s real and what’s not. It’s important to understand, though, that some of what we see, even if generated by A.I., is still true.

Over almost five decades Greg Dobbs has been a correspondent for two television networks including ABC News, a political columnist for The Denver Post and syndicated columnist for Scripps newspapers, a moderator on Rocky Mountain PBS, and author of two books, including one about the life of a foreign correspondent called “Life in the Wrong Lane.” He has covered presidencies, politics, and the U.S. space program at home, and wars, natural disasters, and other crises around the globe, from Afghanistan to South Africa, from Iran to Egypt, from the Soviet Union to Saudi Arabia, from Nicaragua to Namibia, from Vietnam to Venezuela, from Libya to Liberia, from Panama to Poland. Dobbs has won three Emmys, the Distinguished Service Award from the Society of Professional Journalists, and as a 36-year resident of Colorado, a place in the Denver Press Club Hall of Fame.

greg, very good assemblage and coverage on an important subject. john

"The sky's the limit," and imagine what an AI hacker could do to the world's ICBM launch computers.

If a sophisticated AI interruption left Putin unable to deploy his nuclear-armed rockets, he would be forced to change his tune regarding atomic threats.